|

Dongseok Shim

I am a Research Scientist at the Creative AI Lab, Sony Group Corporation, in Tokyo, Japan.

I received my Ph.D. from the Lab for Autonomous Robotics Research (LARR) at Seoul National University, advised by Prof. H. Jin Kim.

Previously, I was a Research Intern with Seed Vision at ByteDance USA. |

|

Experience |

|

Sony Group Corporation, Tokyo, Japan

Research Scientist, Creative AI Lab

Apr. 2025 – Present

Working on multimodal generative models, including video-to-audio generation and multimodal human motion generation. |

|

ByteDance (TikTok), San Jose, CA, USA

Research Intern, Seed Vision

Apr. 2024 – Sep. 2024

Mentors: Yichun Shi, Peng Wang

Worked on relightable text-to-3D generative models. |

Education |

| 2020 – 2025 | Ph.D. in Artificial Intelligence, Seoul National University, Seoul, Korea |

| 2016 – 2020 | B.S. in Mechanical Engineering, Seoul National University, Seoul, Korea |

Publications |

|

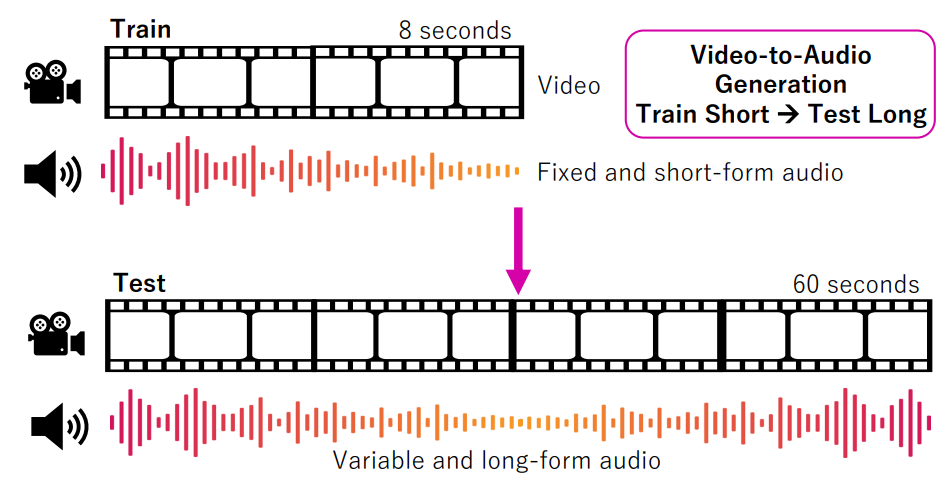

Echoes Over Time: Unlocking Length Generalization in Video-to-Audio Generation Models

Christian Simon, Masato Ishii, Wei-Yao Wang, Koichi Saito, Akio Hayakawa, Dongseok Shim, Zhi Zong, Shuyang Cui, Shusuke Takahashi, Takashi Shibuya, Yuki Mitsufuji

CVPR, 2026

project page | arXiv

A multimodal hierarchical network (MMHNet) that enables scalable video-to-audio generation, achieving long-form synthesis by training on short instances and generalizing to extended durations. |

|

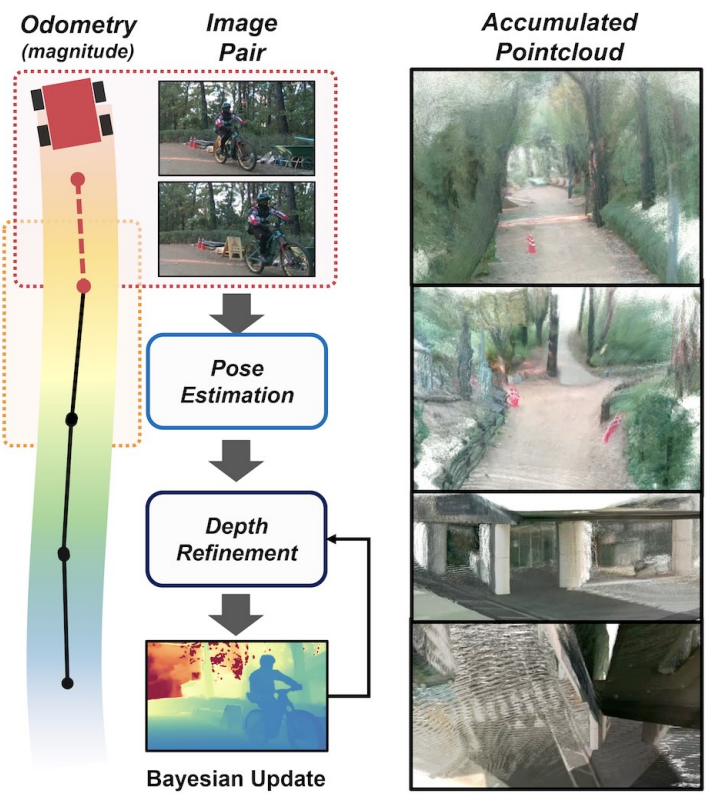

PTC-Depth: Pose-Refined Monocular Depth Estimation with Temporal Consistency

Leezy Han, Seunggyu Kim, Dongseok Shim, Hyeonbeom Lee

CVPR, 2026

project page | arXiv

A consistency-aware monocular depth estimation framework that leverages wheel odometry and optical flow to produce temporally stable and accurate depth predictions. |

|

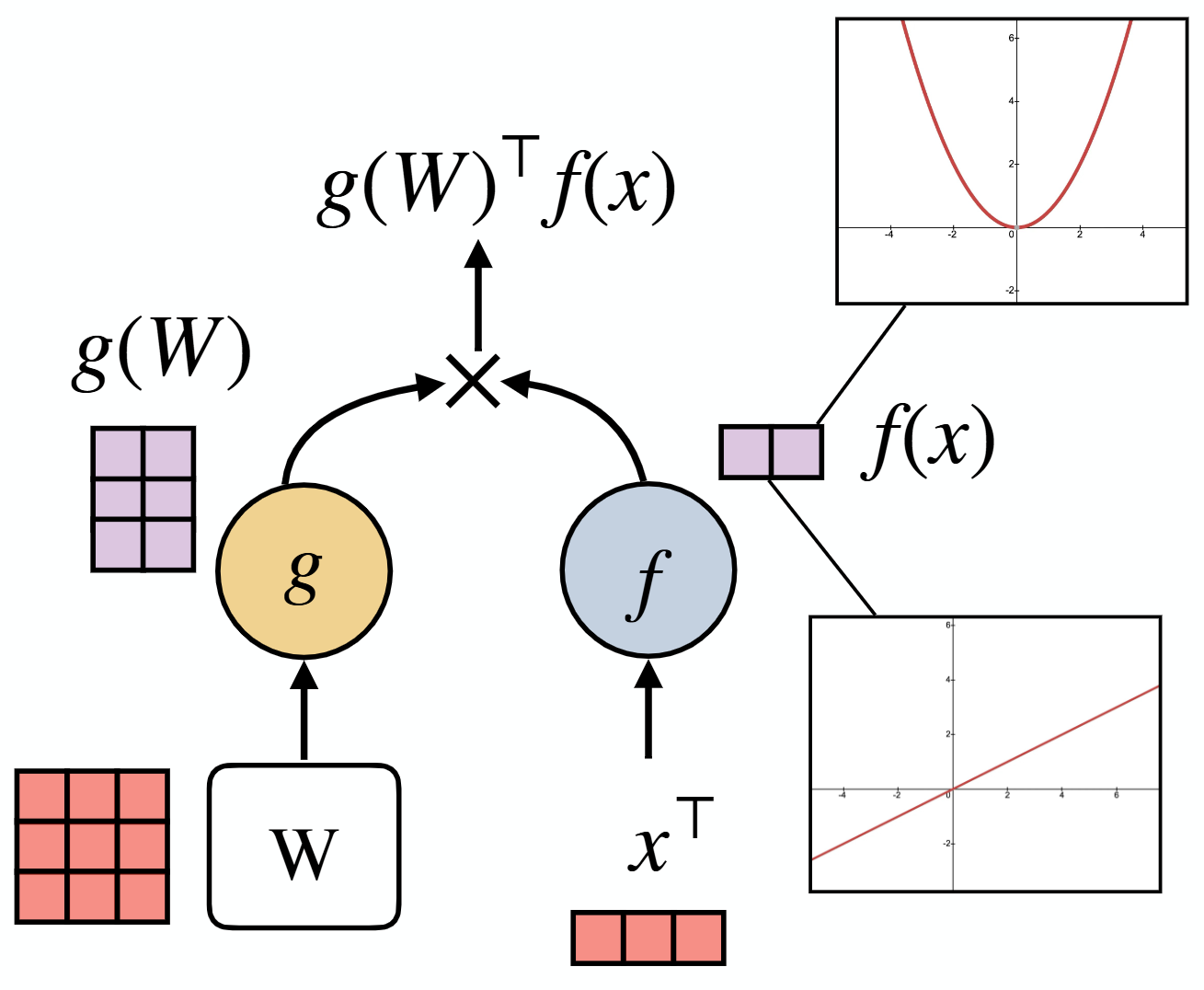

EUGens: Efficient, Unified and General Dense Layers

SangMin Kim, Byeongchan Kim, Arijit Sehanobish, Somnath Basu Roy Chowdhury, Rahul Kidambi, Dongseok Shim, Avinava Dubey, Snigdha Chaturvedi, Min-hwan Oh, Krzysztof Choromanski

NeurIPS, 2025

arXiv

A unified and efficient dense layer that approximates fully connected feedforward layers with linear-time complexity, enabling scalable and resource-efficient neural networks. |

|

|

Periodic Skill Discovery

Jonghae Park, Daesol Cho, Jusuk Lee, Dongseok Shim, Inkyu Jang, H. Jin Kim

NeurIPS, 2025

project page | arXiv | code

An unsupervised reinforcement learning framework that encodes states in a circular latent space to discover diverse periodic behaviors for locomotion. |

|

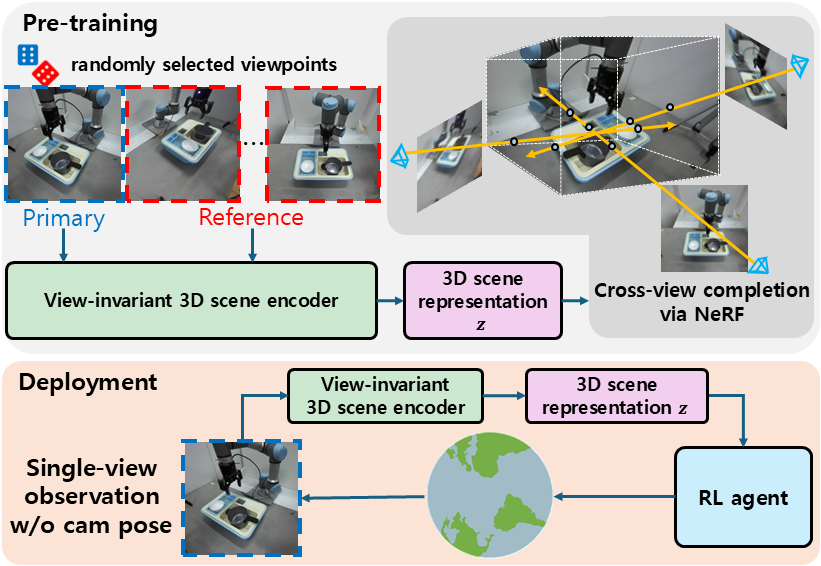

Single-view 3D-aware representations for reinforcement learning by cross-view neural radiance fields

Daesol Cho1, Seungyeon Yoo1, Dongseok Shim, H. Jin Kim

IEEE Robotics and Automation Letters (RA-L), 2025

project page | paper | code

A reinforcement learning framework that learns 3D-aware representations from single-view RGB via masked ViT and NeRF, enabling viewpoint-robust robot manipulation without multi-view supervision. |

|

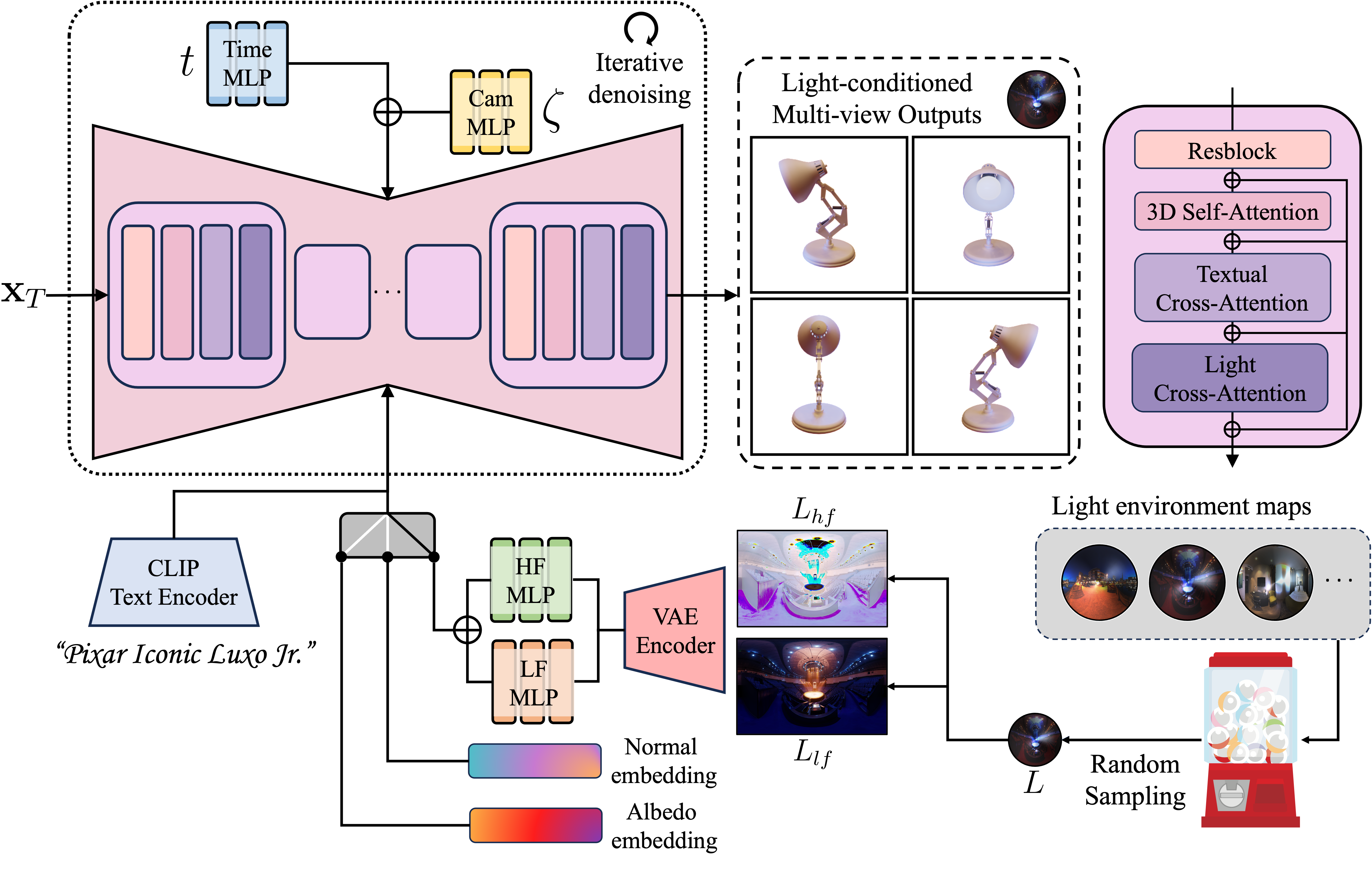

MVLight: Relightable Text-to-3D Generation via Light-conditioned Multi-View Diffusion

Dongseok Shim, Yichun Shi, Kejie Li, H. Jin Kim, Peng Wang

arXiv, 2024

arXiv

A light-conditioned multi-view diffusion model that improves text-to-3D generation by disentangling lighting-dependent and lighting-invariant components for enhanced relighting and geometry. |

|

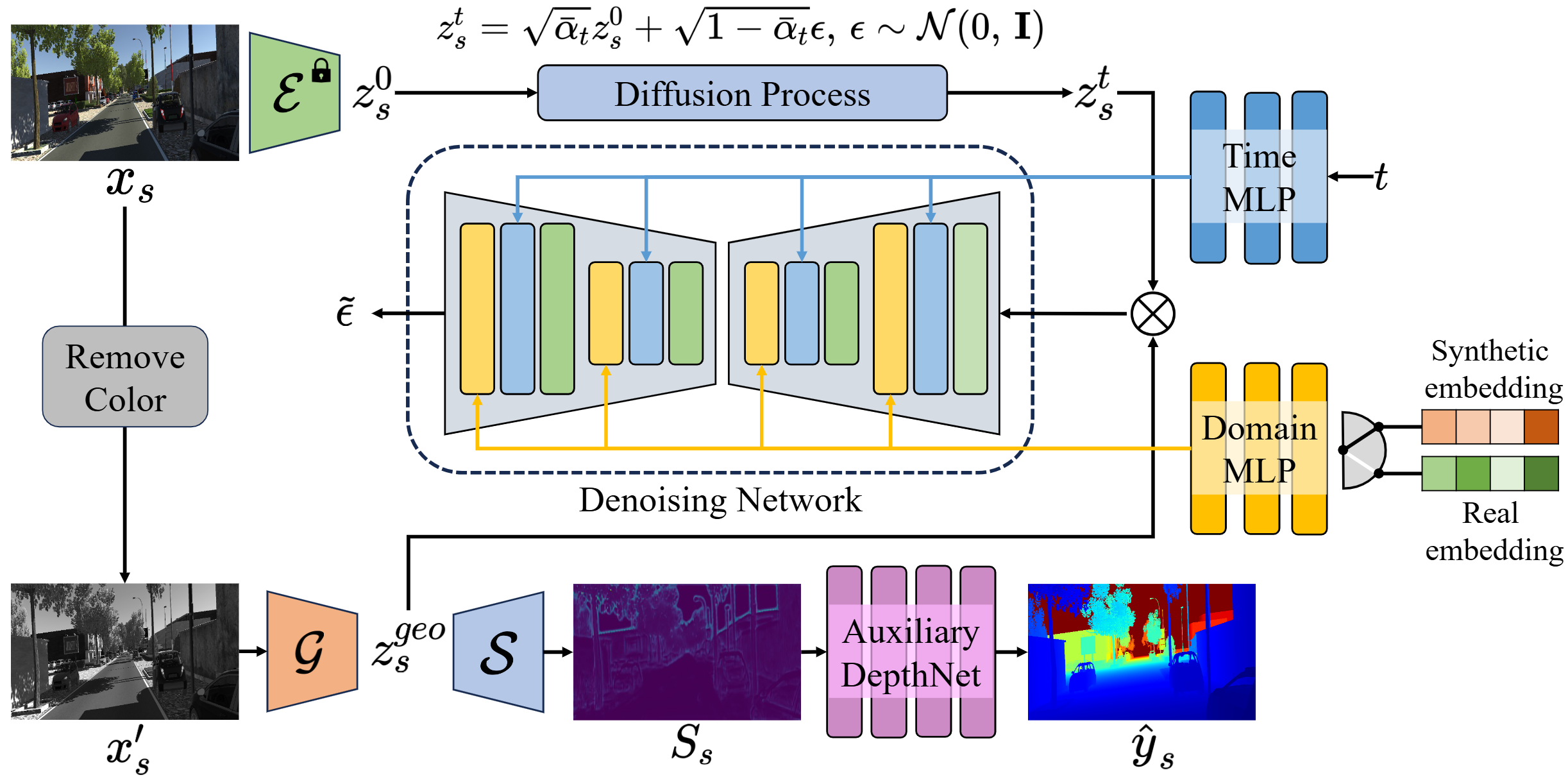

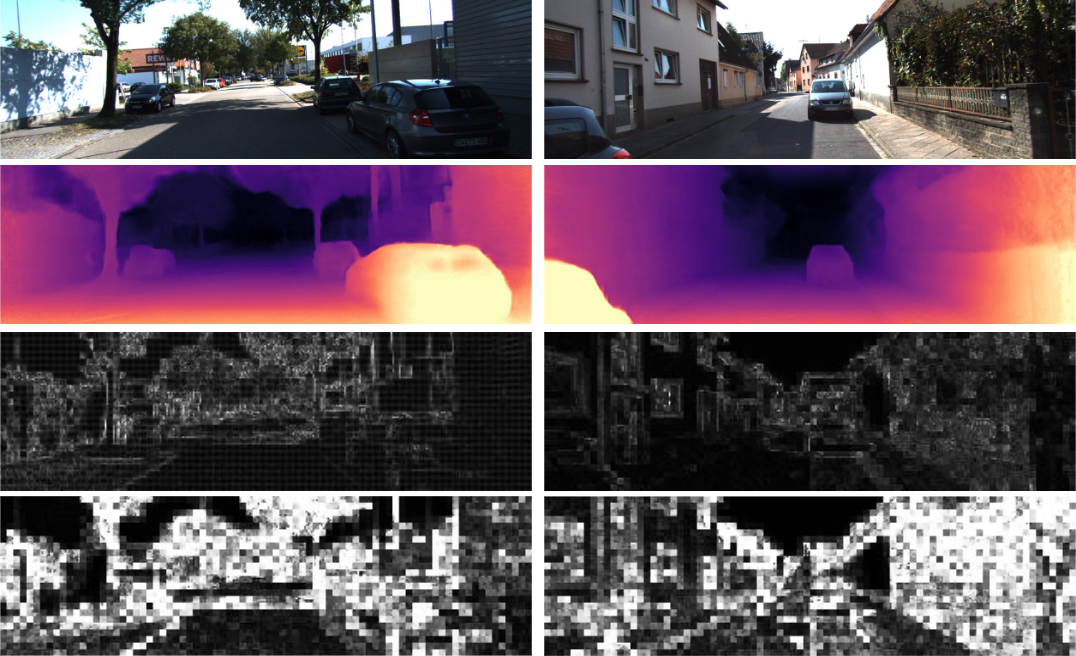

SEDiff: Structure Extraction for Domain Adaptive Depth Estimation via Denoising Diffusion Models

Dongseok Shim, H. Jin Kim

ECCV, 2024

A latent diffusion-based framework that removes domain-specific components and preserves structural consistency for domain-adaptive monocular depth estimation. |

|

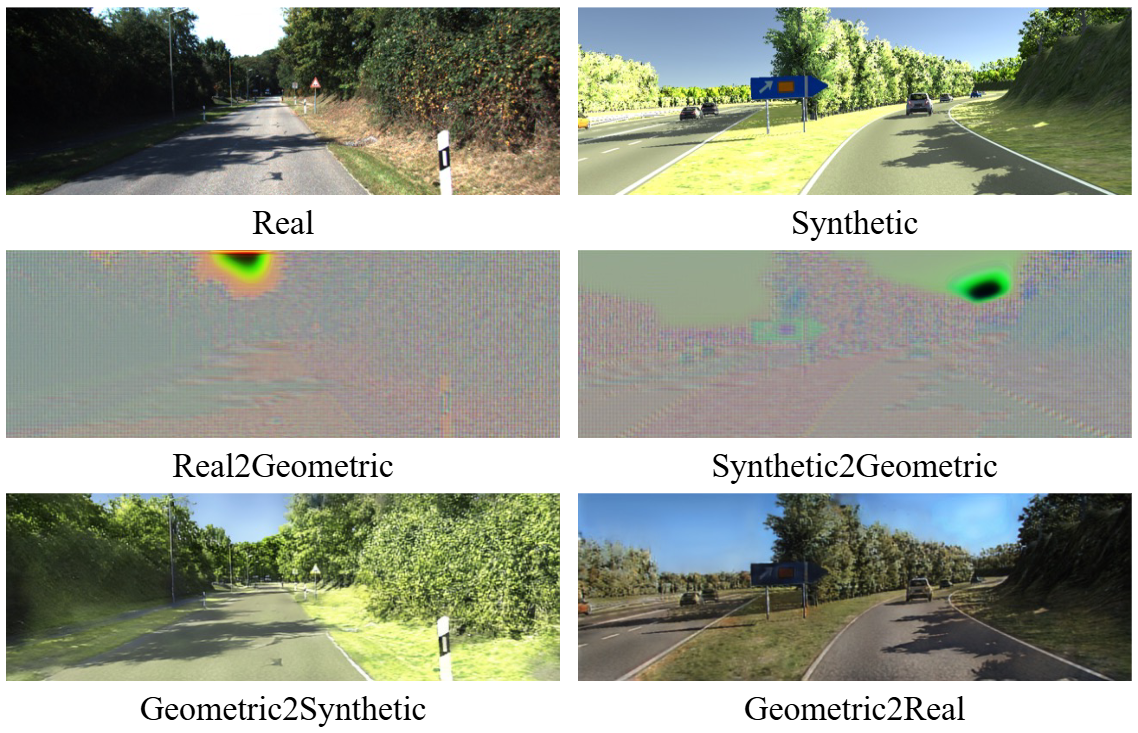

DIVIDE: Learning a Domain-Invariant Geometric Space for Depth Estimation

Dongseok Shim, H. Jin Kim

IEEE Robotics and Automation Letters (RA-L), 2024

paper

A depth estimation framework that learns domain-invariant geometric representations by disentangling domain-specific components with Gram matrix representations. |

|

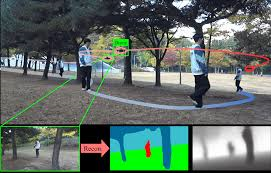

Mono-camera-only target chasing for a drone in a dense environment by cross-modal learning

Seungyeon Yoo1, Seungwoo Jung1, Yunwoo Lee, Dongseok Shim, H. Jin Kim

IEEE Robotics and Automation Letters (RA-L), 2024

project page | paper | video

Learning unified cross-modal representations from RGB, depth, and semantic inputs for enhanced drone-based target tracking. |

|

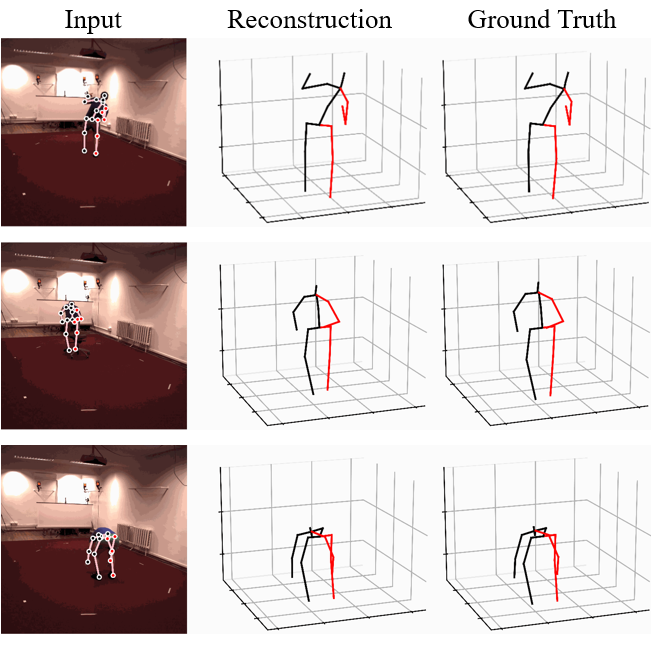

Diffupose: Monocular 3D human pose estimation via denoising diffusion probabilistic model

Jeongjun Choi1, Dongseok Shim1, H. Jin Kim

IROS, 2023

arXiv | code

The first diffusion-based framework for monocular 3D human pose estimation, which uses a GCN to generate diverse 3D pose hypotheses from a single 2D keypoint input. |

|

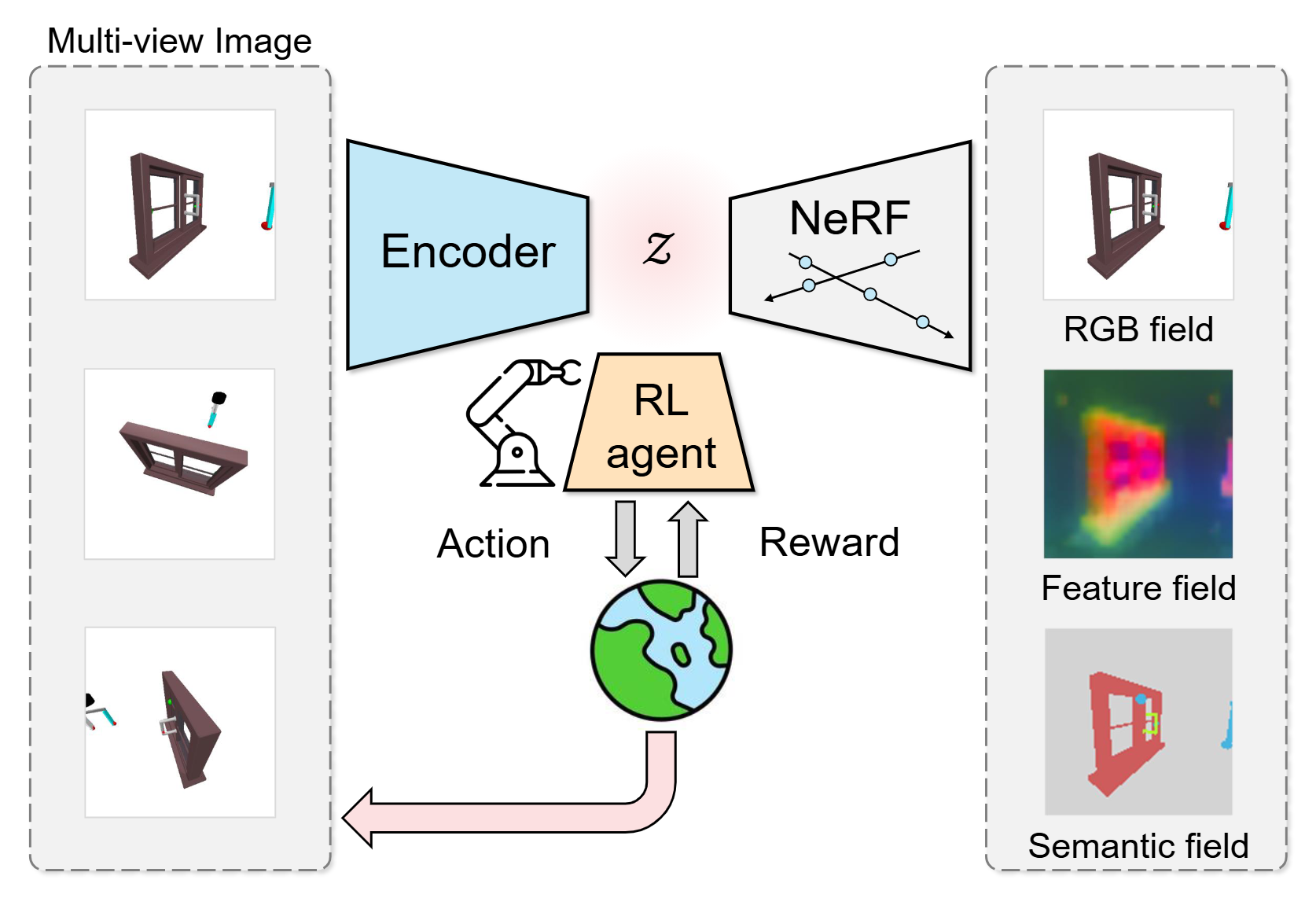

SNeRL: Semantic-aware Neural Radiance Fields for Reinforcement Learning

Dongseok Shim1, Seungjae Lee1, H. Jin Kim

ICML, 2023

arXiv | code

A semantic-aware NeRF-based framework that jointly learns 3D-aware and object-centric representations for improved reinforcement learning performance. |

|

SwinDepth: Unsupervised Depth Estimation using Monocular Sequences via Swin Transformer and Densely Cascaded Network

Dongseok Shim, H. Jin Kim

ICRA, 2023

arXiv | code

A Swin Transformer-based encoder and densely cascaded decoder architecture for unsupervised depth estimation using monocular sequences. |

|

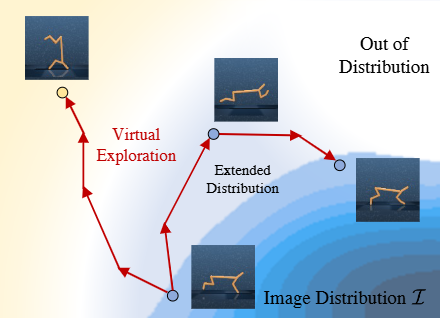

S2P: State-conditioned Image Synthesis for Data Augmentation in Offline Reinforcement Learning

Daesol Cho1, Dongseok Shim1, H. Jin Kim

NeurIPS, 2022

arXiv | code

A generative state-to-image framework that bridges state and image domains to improve generalization in image-based offline reinforcement learning. |

|

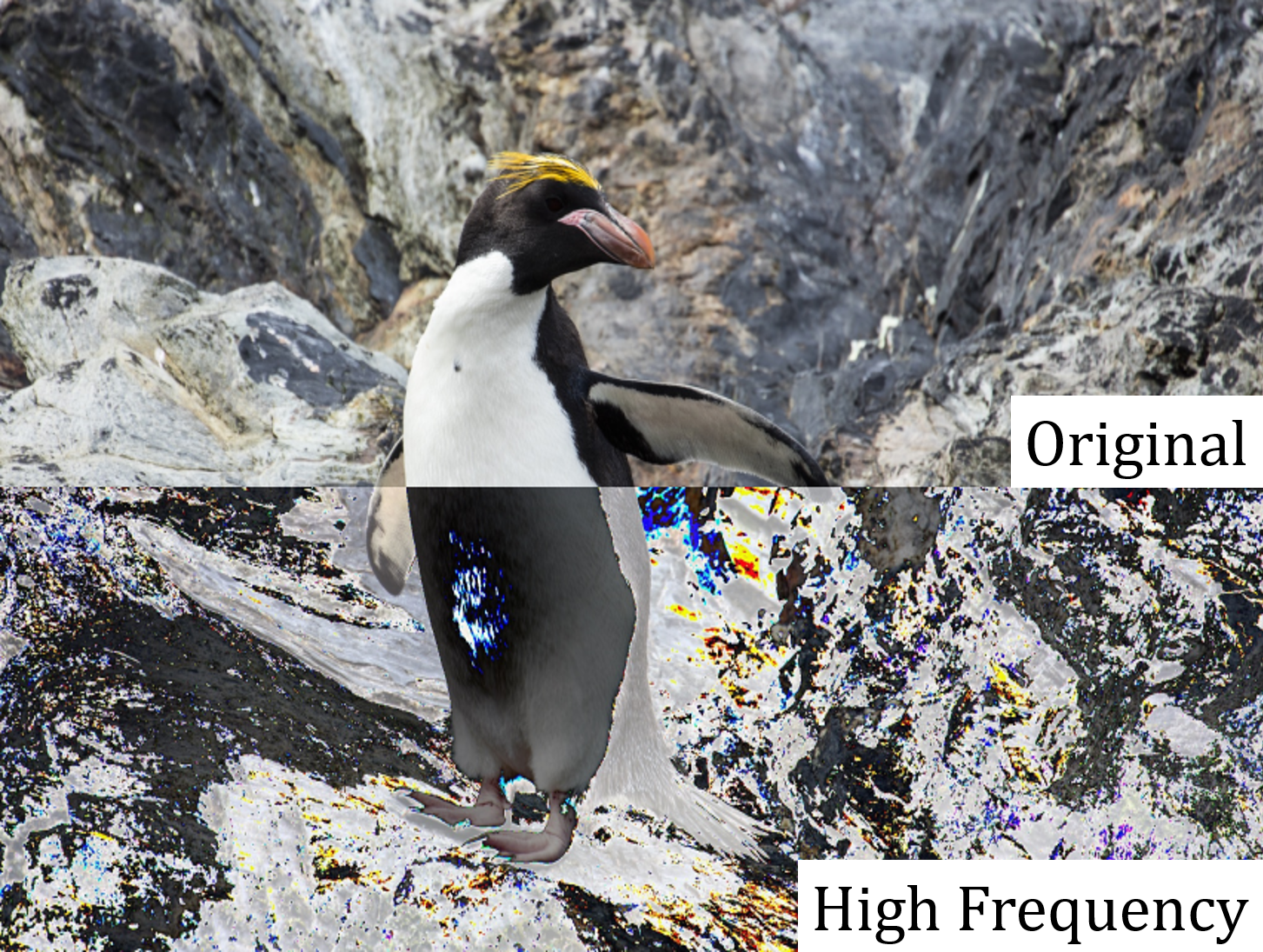

FS-NCSR: Increasing Diversity of the Super-Resolution Space via Frequency Separation and Noise-Conditioned Normalizing Flow

Ki-Ung Song1, Dongseok Shim1, Kang-wook Kim1, Jae-young Lee, Younggeun Kim

CVPR NTIRE Workshop, 2022

arXiv | code

A frequency-separated, normalizing flow (NF)-based super-resolution framework that generates diverse and high-quality outputs by modeling high-frequency details. |

|

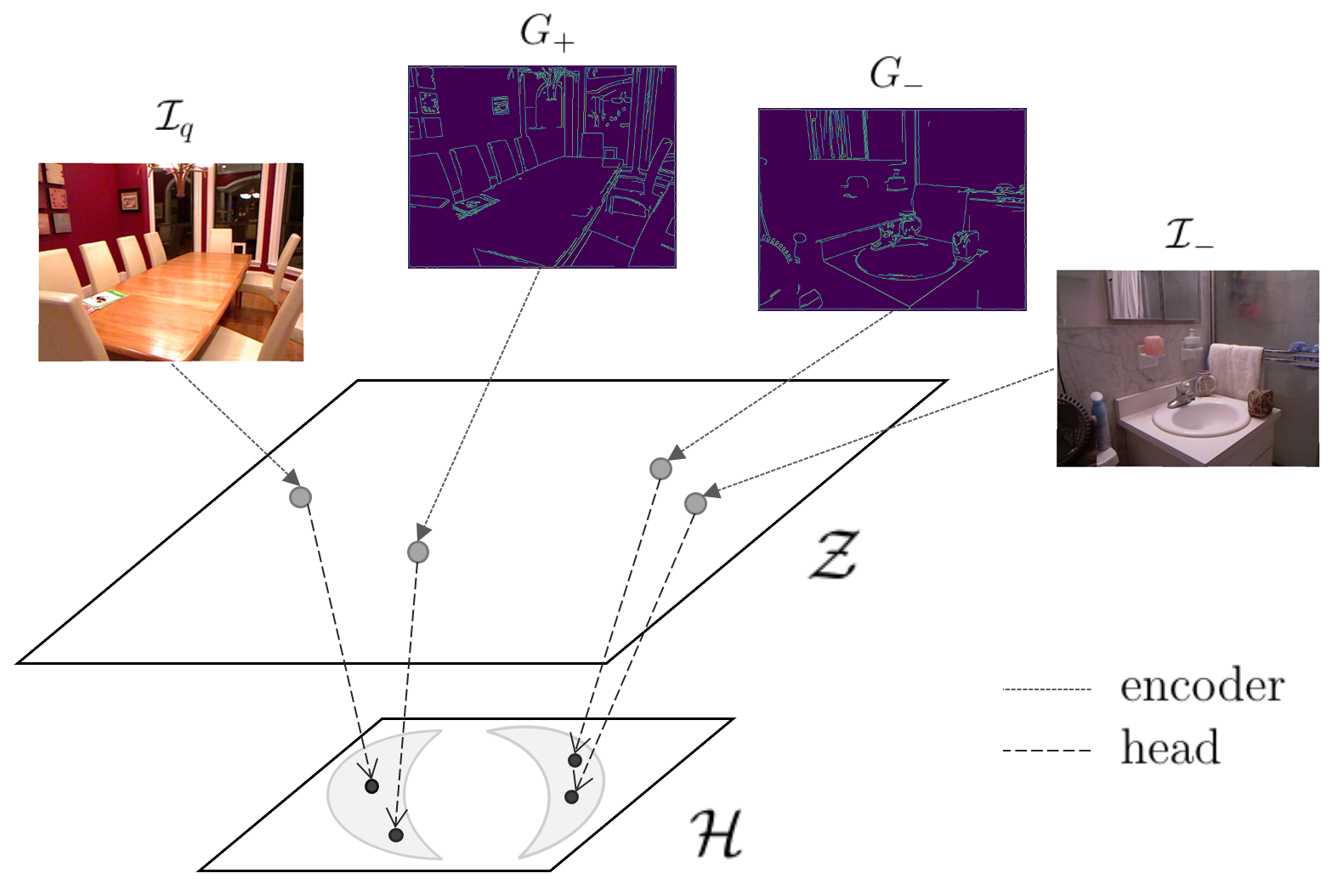

Learning a Geometric Representation for Data-Efficient Depth Estimation via Gradient Field and Contrastive Loss

Dongseok Shim, H. Jin Kim

ICRA, 2021

arXiv | code

A self-supervised learning approach for monocular depth estimation that leverages gradient-based representations and momentum contrastive loss to capture geometric information.

Honors and Awards |

| 2025 | Best Ph.D. Dissertation Award (Honorable Mention), Graduate School of Artificial Intelligence, Seoul National University |

| 2022 | Runner-up at NTIRE 2022 Challenge on Learning the Super-resolution Space |

Academic Service |

| Reviewer: CVPR, ICCV, ECCV, ICLR, NeurIPS, AAAI, ICML, ICRA, IROS, 3DV, etc. |

|

This website is built with Jon Barron's source code. |